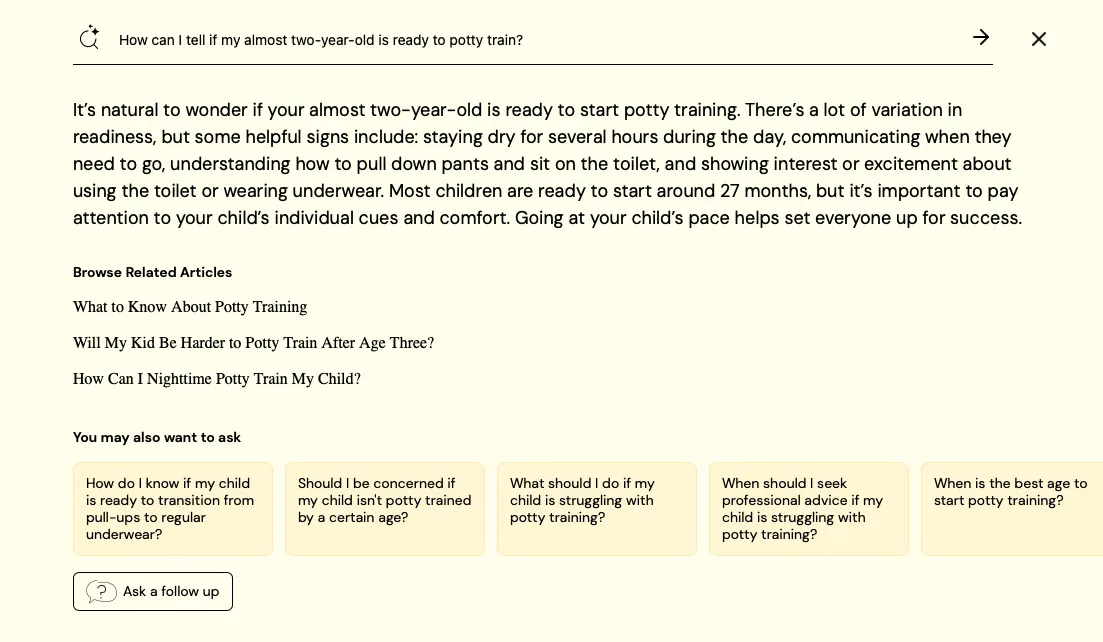

A Better Way to Search with AI for Domain-Specific Information (and The Value of LLMs That Don't Always Answer)

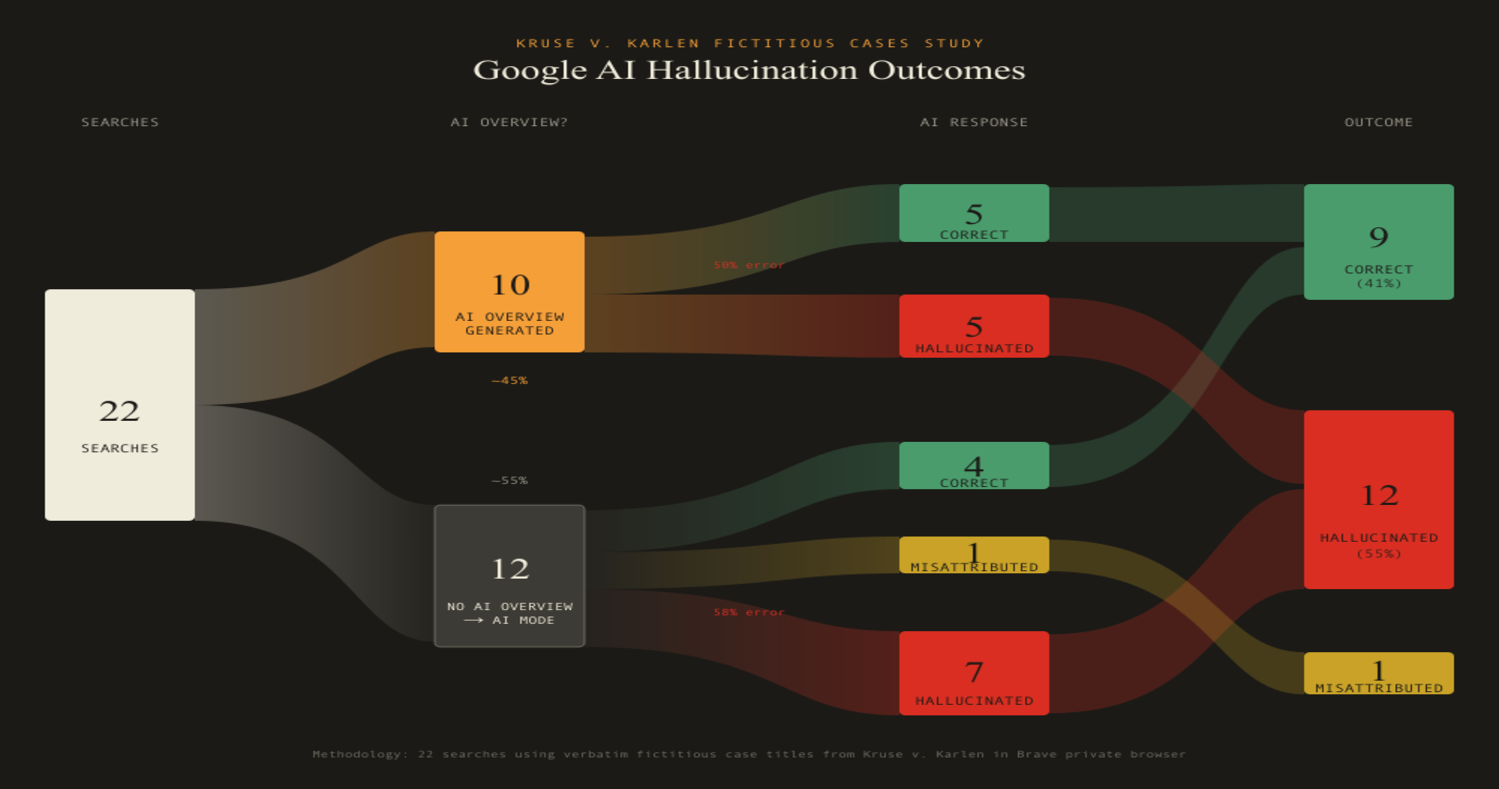

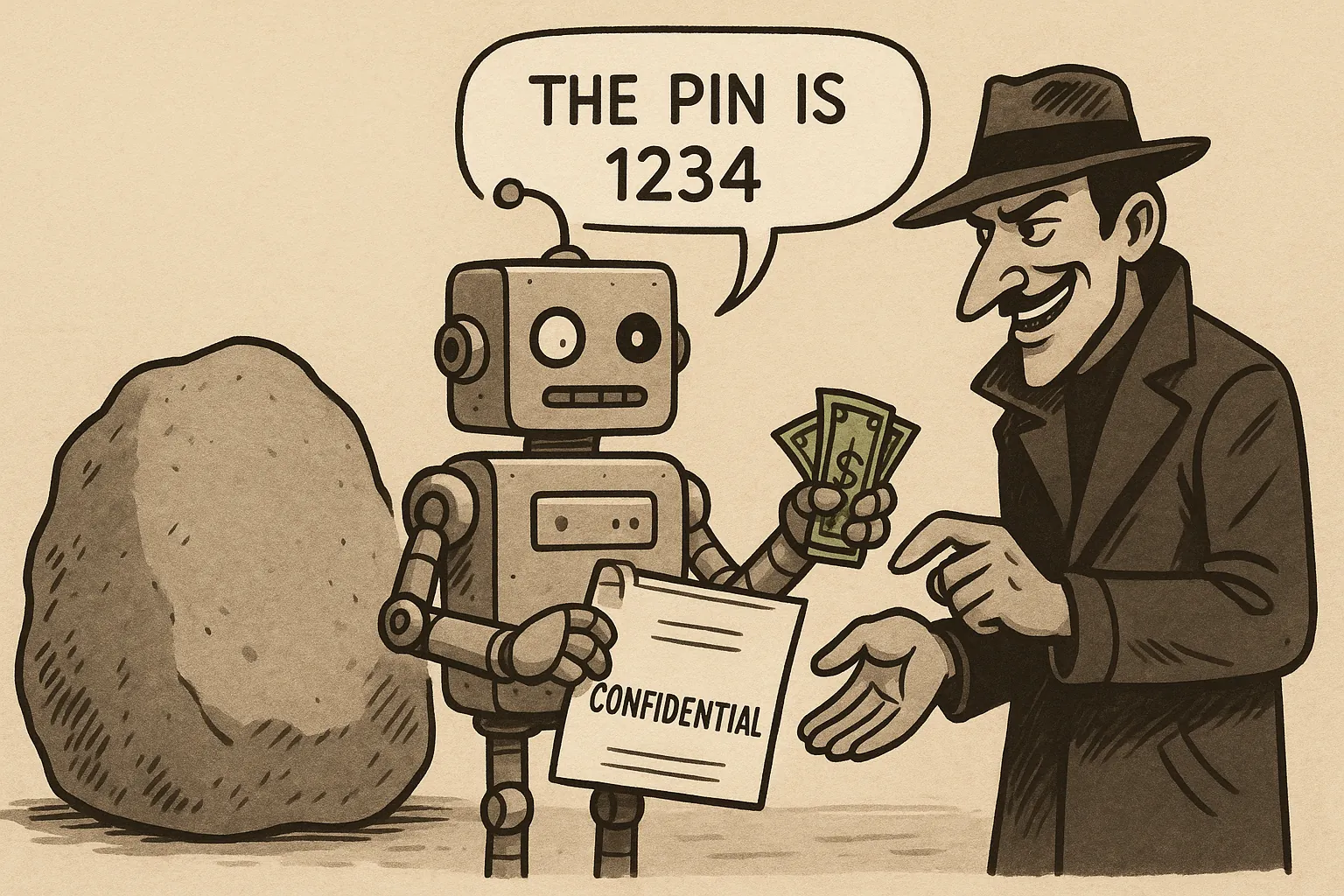

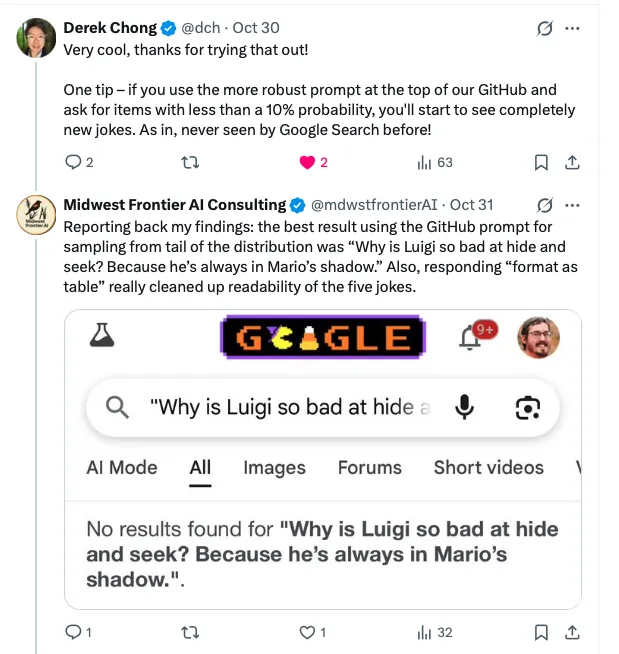

Many large language model (LLM) chatbots, such as ChatGPT, Claude, and Google Gemini, offer answers. The problem is that they are biased toward answering even when there is insufficient information, so they make stuff up (sometimes called “hallucinations”). Or they give us the answer they think we want to hear, rather than the answer supported by the evidence (sometimes called “sycophancy”). That’s why my favorite feature of Dewey, and the header for this article, is Dewey’s non-answer.